Prompt Engineering as a Software Design Skill: Best Practices for Engineers

The rise of large language models (LLMs) has fundamentally changed how we approach application development. What once required complex algorithmic design or training a custom model on large datasets can now, in many cases, be achieved with a well-crafted prompt. This shift brings a new discipline to the forefront for engineers: prompt engineering as a software design skill. It’s no longer just about knowing how to ask a question; it’s about designing interactions with AI that are robust, predictable, and scalable, much like designing any other component of a software system.

For engineers building AI-powered applications, mastering prompt engineering is becoming as important as understanding database schemas or API design, because prompts largely determine the behavior, reliability, and ultimate value of an LLM integration. Let’s look at some best practices that turn prompt engineering from trial and error into a core software design competency.

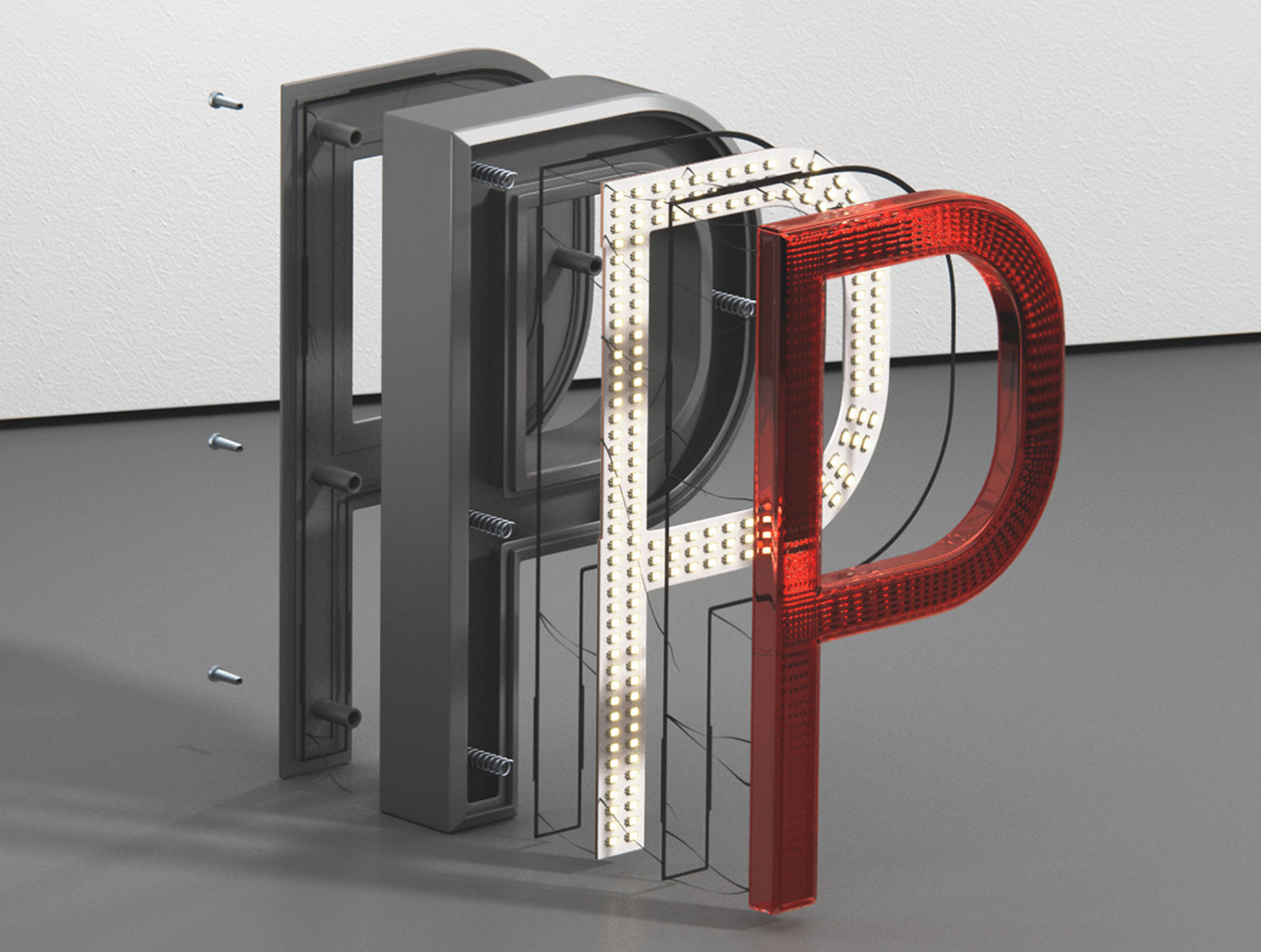

Treat Prompts as Code: Version Control and Modularity

You wouldn’t deploy application code without version control, and prompts, especially those that drive critical application logic, deserve the same rigor. A prompt is effectively an executable specification: it directly shapes system behavior, so it needs the same careful management as any other code.

-

Version Control Your Prompts

Store your prompts in a version control system such as Git, alongside your application code. This lets you track changes, revert to earlier versions, and review prompt edits the same way you review code. When a model update changes behavior, you can trace which prompt version, running against which model version, produced which outcome.
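A minimal sketch, in Python, of what treating a prompt as versioned data can look like. The `PromptTemplate` class, the `log_record` helper, and the model name are hypothetical illustrations rather than any library's API; in a real project the template text would typically be loaded from a file committed to Git instead of being defined inline.

```python
import hashlib
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptTemplate:
    """A prompt kept as data so it can live in Git next to the code (hypothetical class)."""
    name: str
    version: str
    text: str

    @property
    def fingerprint(self) -> str:
        # Short content hash: changes whenever the prompt text changes,
        # even if nobody remembered to bump the version string.
        return hashlib.sha256(self.text.encode("utf-8")).hexdigest()[:12]

    def render(self, **variables: str) -> str:
        # Template.substitute raises KeyError when a placeholder is missing,
        # which surfaces mismatches between template and calling code early.
        return Template(self.text).substitute(**variables)


def log_record(prompt: PromptTemplate, model: str, output: str) -> dict:
    """Record which prompt version and which model produced an output."""
    return {
        "prompt": prompt.name,
        "prompt_version": prompt.version,
        "prompt_sha": prompt.fingerprint,
        "model": model,
        "output": output,
    }


SUMMARIZE_V2 = PromptTemplate(
    name="summarize_article",
    version="2",
    text="Summarize the following article into three concise bullet points.\n\n$article",
)

if __name__ == "__main__":
    print(SUMMARIZE_V2.render(article="LLMs are changing software design."))
    # "example-model" is a placeholder model identifier.
    print(log_record(SUMMARIZE_V2, model="example-model", output="- ..."))
```

Logging the content hash next to the version string is a deliberate choice: it catches prompt edits that were committed without a version bump.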

-

Modular Prompt Design

Break down complex tasks into smaller, manageable sub-prompts. Instead of one monolithic prompt for a multi-step process, chain together smaller, focused prompts. This improves readability, makes debugging easier, and promotes reuse. For example, keep a prompt for “extracting entities” separate from one for “summarizing an article.” Because each step has a single job, it can be tested, versioned, and replaced independently.
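The entity/summary split described above might be sketched as two small functions composed into a chain. This assumes a hypothetical `llm` callable that takes a prompt string and returns the model's text; `fake_llm` is a stand-in so the chain can run without a real model, and the prompt wording is illustrative.

```python
from typing import Callable

# Hypothetical client signature: prompt in, model text out.
LLM = Callable[[str], str]


def extract_entities(llm: LLM, article: str) -> list[str]:
    """Sub-prompt 1: one job, one output format (one entity per line)."""
    prompt = (
        "List the people, organizations, and products named in the article below, "
        "one per line, with no other text.\n\n" + article
    )
    return [line.strip() for line in llm(prompt).splitlines() if line.strip()]


def summarize(llm: LLM, article: str, entities: list[str]) -> str:
    """Sub-prompt 2: reuses the output of step 1 as extra context."""
    prompt = (
        "Summarize the article below in two sentences. "
        f"Mention these entities where relevant: {', '.join(entities)}.\n\n" + article
    )
    return llm(prompt).strip()


def summarize_pipeline(llm: LLM, article: str) -> dict:
    # Each step can be tested, versioned, and swapped on its own.
    entities = extract_entities(llm, article)
    return {"entities": entities, "summary": summarize(llm, article, entities)}


def fake_llm(prompt: str) -> str:
    # Offline stand-in for a real model, keyed on which sub-prompt it receives.
    if prompt.startswith("List the people"):
        return "Ada Lovelace\nAnalytical Engine\n"
    return "A short summary."


if __name__ == "__main__":
    print(summarize_pipeline(fake_llm, "Ada Lovelace wrote about the Analytical Engine."))
```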

Clarity, Constraints, and Context: Designing for Predictability

The goal of designing intelligent systems is to achieve predictable outcomes from unpredictable inputs. Prompts are your primary tool for guiding the LLM towards that predictability.

-

Be Explicit and Unambiguous

Assume the LLM knows nothing beyond what you explicitly tell it. Avoid vague language. Specify desired output formats (JSON, XML, bullet points) whenever possible. For instance, instead of “Summarize this,” try “Summarize the following article into three concise bullet points, focusing on the key arguments. Respond only with the bullet points, no additional prose.”
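One way to apply this, sketched under the assumption that the model replies in plain text: build the explicit summary prompt from the example above, then verify that the reply actually has the requested shape before using it. `build_summary_prompt` and `parse_bullets` are illustrative names, and the "- " bullet prefix is an assumed convention spelled out in the prompt.

```python
def build_summary_prompt(article: str, n_bullets: int = 3) -> str:
    # Spell out the count, the focus, and the exact output shape.
    return (
        f"Summarize the following article into {n_bullets} concise bullet points, "
        "focusing on the key arguments. Respond only with the bullet points, "
        "each on its own line starting with '- ', and no additional prose.\n\n"
        f"Article:\n{article}"
    )


def parse_bullets(response: str, n_bullets: int = 3) -> list[str]:
    """Accept the reply only if it follows the format the prompt asked for."""
    lines = [line.strip() for line in response.strip().splitlines() if line.strip()]
    bullets = [line[2:].strip() for line in lines if line.startswith("- ")]
    # Reject extra prose lines as well as the wrong number of bullets.
    if len(bullets) != len(lines) or len(bullets) != n_bullets:
        raise ValueError(f"expected {n_bullets} bullet lines, got: {response!r}")
    return bullets
```

Rejecting a reply that contains even one line of preamble may feel strict, but it turns a vague formatting problem into an explicit error the caller can retry or log.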

-

Define Boundaries and Constraints

Clearly state any limits on the LLM’s response, such as length limits, required output types, or forbidden topics. Stating these guardrails up front reduces undesired outputs, though it does not eliminate them, so critical constraints should also be checked in code. This is especially important for sensitive applications or when handling user-generated content.
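A sketch of stating constraints in the prompt and re-checking the same constraints in code. The `Constraints` fields and the case-insensitive substring check for forbidden terms are simplifying assumptions; a production filter would usually need something more robust than keyword matching.

```python
from dataclasses import dataclass, field


@dataclass
class Constraints:
    max_chars: int = 500
    forbidden_terms: list[str] = field(default_factory=list)


def constraint_block(c: Constraints) -> str:
    """Text appended to the prompt so the model sees the limits up front."""
    lines = [f"- Keep the answer under {c.max_chars} characters."]
    if c.forbidden_terms:
        lines.append("- Do not discuss: " + ", ".join(c.forbidden_terms) + ".")
    return "Constraints:\n" + "\n".join(lines)


def violations(output: str, c: Constraints) -> list[str]:
    """Re-check the same limits in code, since the model may not obey them."""
    problems = []
    if len(output) > c.max_chars:
        problems.append(f"too long: {len(output)} > {c.max_chars} chars")
    lowered = output.lower()
    for term in c.forbidden_terms:
        # Naive substring match; stands in for a real content filter.
        if term.lower() in lowered:
            problems.append(f"mentions forbidden term: {term!r}")
    return problems
```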

-

Provide Sufficient Context

Beyond what it learned in training, the LLM only knows what is in the prompt. Include the background information, earlier conversation turns, and relevant data the model needs to make an informed decision or generate a relevant response. In practice, this often means retrieving relevant documents from a vector database or appending recent chat history.
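A minimal sketch of assembling context from retrieved documents and recent chat history. To keep it self-contained, `retrieve` ranks documents by naive word overlap as a stand-in for a vector database query; the function names, the `max_turns` cutoff, and the prompt wording are all assumptions.

```python
def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Toy retriever: rank documents by words shared with the query.
    A real system would query a vector database here instead."""
    q = set(query.lower().split())
    ranked = sorted(documents, key=lambda d: len(q & set(d.lower().split())), reverse=True)
    return ranked[:k]


def build_context_prompt(question: str, documents: list[str],
                         history: list[tuple[str, str]], max_turns: int = 3) -> str:
    # Keep only the most recent turns so the prompt stays within the context window.
    recent = history[-max_turns:]
    history_text = "\n".join(f"{role}: {text}" for role, text in recent)
    docs = retrieve(question, documents)
    docs_text = "\n".join(f"[{i + 1}] {d}" for i, d in enumerate(docs))
    return (
        "Answer using only the reference documents below. "
        "If they do not contain the answer, say so.\n\n"
        f"Reference documents:\n{docs_text}\n\n"
        f"Conversation so far:\n{history_text}\n\n"
        f"user: {question}"
    )
```

Truncating history by turn count is the simplest policy; counting tokens against the model's actual context limit would be more precise but depends on the tokenizer in use.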

Iterative Refinement and Evaluation

Prompt engineering is an iterative process. Rarely will your first prompt be your best prompt. It requires continuous testing and refinement, much like any other software component.

-

Develop a Testing Methodology

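Create a suite of test cases for your prompts: representative inputs paired with the properties their outputs must satisfy (format, length, required facts), since the exact wording of LLM output often varies between runs. Automate evaluation where possible; this is how you detect regressions when the model is updated or a prompt is modified. Consider running these prompt tests in your CI/CD pipeline.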
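A small, framework-free sketch of such a suite, assuming a hypothetical `llm` callable (prompt in, text out). Checks are expressed as properties of the output rather than exact strings; `PromptCase`, `run_suite`, and the check helpers are illustrative names. A CI job could fail the build whenever `run_suite` returns a non-empty list.

```python
from dataclasses import dataclass
from typing import Callable

LLM = Callable[[str], str]
Check = Callable[[str], bool]


@dataclass
class PromptCase:
    name: str
    input_text: str
    checks: list[Check]  # properties the output must satisfy


def run_suite(llm: LLM, build_prompt: Callable[[str], str],
              cases: list[PromptCase]) -> list[str]:
    """Run every case and return descriptions of failed checks (empty list = pass)."""
    failures = []
    for case in cases:
        output = llm(build_prompt(case.input_text))
        for i, check in enumerate(case.checks):
            if not check(output):
                failures.append(f"{case.name}: check {i} failed")
    return failures


# Property-style checks, because exact wording usually varies between runs.
def has_n_bullets(n: int) -> Check:
    return lambda out: sum(1 for line in out.splitlines() if line.startswith("- ")) == n


def max_length(n: int) -> Check:
    return lambda out: len(out) <= n


CASES = [
    PromptCase("short article", "LLMs are changing how software is built.",
               [has_n_bullets(3), max_length(400)]),
]

if __name__ == "__main__":
    # A fixed fake response keeps this example offline; CI would call the real model.
    fake = lambda prompt: "- point one\n- point two\n- point three"
    failures = run_suite(fake, lambda text: f"Summarize in 3 bullets:\n{text}", CASES)
    print("\n".join(failures) or "all checks passed")
```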

-

Monitor and Gather Feedback

Once deployed, monitor the performance of your LLM-integrated features. Collect user feedback on the quality and relevance of the AI-generated content. This feedback loop shows where your prompts fall short of user needs and where outputs are less reliable than intended, which tells you what to refine next.
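A sketch of turning logged interactions into a per-prompt-version report, so a prompt change can be compared against the version it replaced. The `Interaction` fields (an optional thumbs-up flag and a latency measurement) are an assumed logging schema, not a standard one.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class Interaction:
    prompt_version: str
    latency_ms: float
    thumbs_up: Optional[bool]  # None means the user gave no feedback


def feedback_by_version(events: list[Interaction]) -> dict[str, dict[str, float]]:
    """Aggregate request count, approval rate, and mean latency per prompt version."""
    grouped: dict[str, list[Interaction]] = defaultdict(list)
    for e in events:
        grouped[e.prompt_version].append(e)
    report = {}
    for version, items in grouped.items():
        rated = [e.thumbs_up for e in items if e.thumbs_up is not None]
        report[version] = {
            "count": len(items),
            # NaN when nobody rated this version, rather than a misleading 0.
            "approval_rate": sum(rated) / len(rated) if rated else float("nan"),
            "mean_latency_ms": sum(e.latency_ms for e in items) / len(items),
        }
    return report
```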

Embracing prompt engineering as a core software design skill is no longer optional for engineers working with AI. It bridges the gap between raw LLM capability and robust, valuable applications. By applying software engineering practices (modularity, version control, clear specifications, and rigorous testing) to your prompts, you make your LLM integration not just functional but maintainable as models and requirements evolve.