The rapid evolution of artificial intelligence, from sophisticated large language models to complex computer vision systems, is fundamentally reshaping industries. While algorithmic breakthroughs often grab headlines, progress in the underlying hardware is arguably just as critical. Without continuous advances in the hardware that powers AI computing, many of today’s most ambitious AI projects would remain theoretical, unable to meet their sheer computational demands. Let’s delve into how silicon is keeping pace with intelligence.

The GPU’s Enduring Reign: Parallel Powerhouses

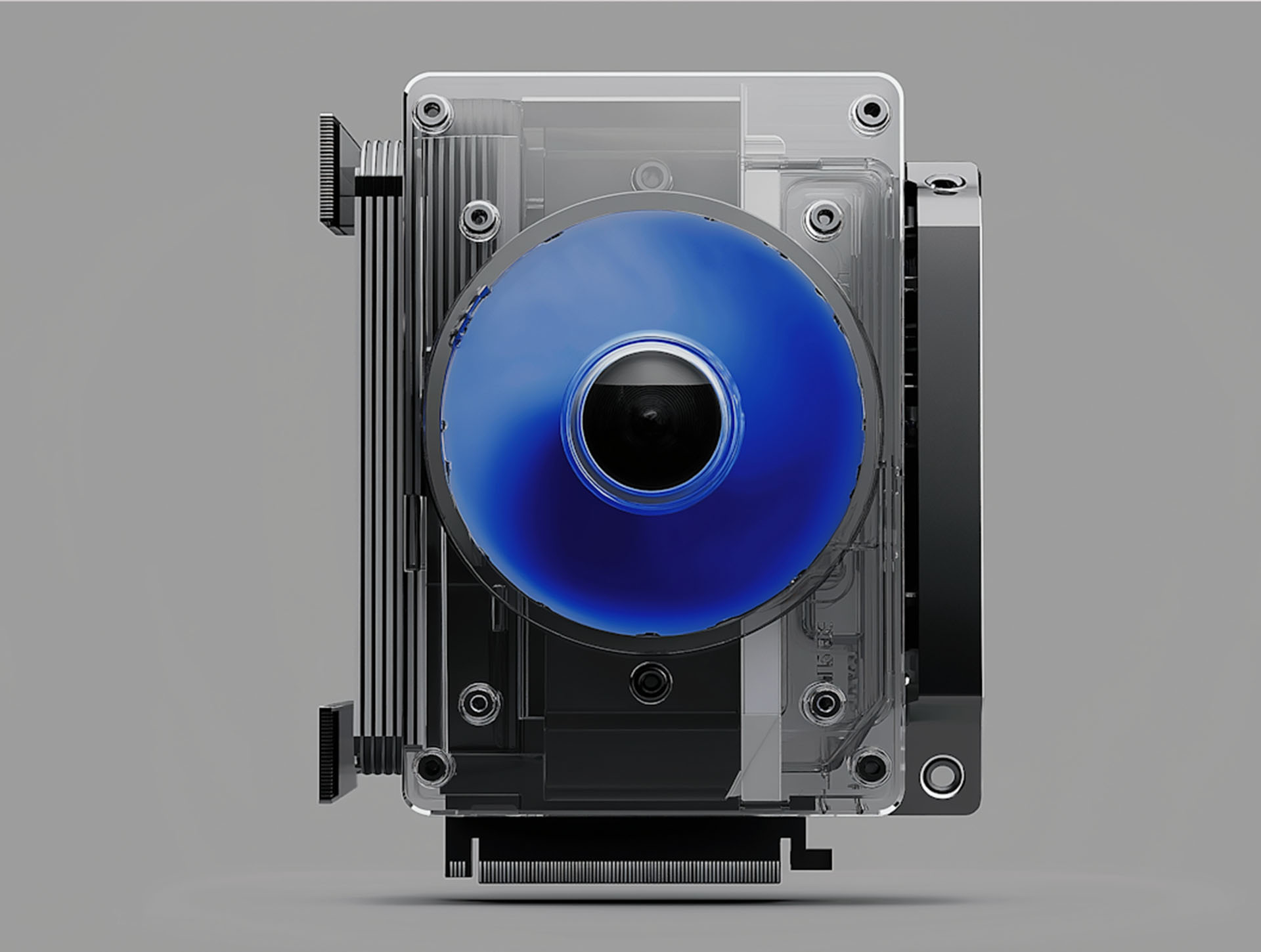

For years, Graphics Processing Units (GPUs) have been the workhorses of AI, particularly deep learning. NVIDIA’s CUDA platform, in conjunction with its GPU architecture, established an early and strong lead. The parallel processing capabilities of GPUs, originally designed to compute many pixels and vertices at once when rendering graphics, proved well suited to the matrix multiplications and tensor operations central to neural network training. Modern data center GPUs, such as NVIDIA’s H100 or AMD’s Instinct MI300X, are not just about raw core count; they include dedicated matrix-math units (Tensor Cores on NVIDIA hardware, Matrix Cores on AMD hardware) that accelerate AI workloads specifically, delivering high throughput for both training and inference. This evolution has solidified their position as the go-to solution for high-performance AI computing.
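
To see why matrix multiplication parallelizes so well, consider a minimal Python sketch. It is illustrative only and is not how a GPU library is implemented; the function names `dot_cell`, `matmul`, and `matmul_flops` are made up for this example. Each output element is an independent dot product, so in principle every element could be handed to a separate thread.

```python
def dot_cell(a, b, i, j):
    """Compute one output element C[i][j].

    It depends only on row i of A and column j of B.
    """
    return sum(a[i][k] * b[k][j] for k in range(len(b)))


def matmul(a, b):
    """Naive matrix product.

    Every (i, j) cell is independent of all the others, which is
    what lets parallel hardware compute many of them at once.
    """
    rows, cols = len(a), len(b[0])
    return [[dot_cell(a, b, i, j) for j in range(cols)] for i in range(rows)]


def matmul_flops(m, k, n):
    """Conventional operation count for an (m x k) @ (k x n) product:
    one multiply and one add per term."""
    return 2 * m * k * n


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
c = matmul(a, b)
print(c)
print(matmul_flops(4096, 4096, 4096))
```

Under this counting convention, a single 4096 x 4096 product already costs well over a hundred billion operations, which is why hardware that can run thousands of these independent dot products concurrently matters so much for training.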

Purpose-Built Silicon: The Rise of Specialized AI Accelerators

While GPUs excel, the pursuit of greater efficiency and lower power consumption has led to a proliferation of specialized AI chips, often referred to as AI accelerators. Many of these are Application-Specific Integrated Circuits (ASICs), custom-designed for particular AI tasks and sacrificing general-purpose flexibility for extreme optimization. Google’s Tensor Processing Units (TPUs) are a prime example, originally built to accelerate TensorFlow workloads in the company’s data centers. We’re also seeing a surge in edge AI hardware, where devices like smartphones, smart cameras, and autonomous vehicles require powerful yet energy-efficient inference capabilities. Companies are designing custom chips that integrate neural processing units (NPUs) or dedicated AI engines directly onto the SoC (System on Chip), enabling real-time AI processing without constant cloud connectivity.

Key Drivers for Specialized AI Chips:

- Energy Efficiency: Reducing power consumption per operation, crucial for mobile and edge devices.

- Cost Optimization: Tailoring silicon for specific workloads can lead to lower unit costs at scale.

- Performance per Watt: Delivering maximum computational power within strict thermal and power envelopes.

- Reduced Latency: Enabling real-time decision-making for critical applications like autonomous driving.
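
The efficiency drivers above come down to simple arithmetic, sketched here in Python. The two chips and every number in the example are hypothetical placeholders, not vendor specifications, and the calculation assumes each chip runs at its full rated throughput, which real workloads rarely achieve.

```python
def tops_per_watt(tera_ops_per_second, watts):
    """Performance per watt: throughput divided by power draw."""
    return tera_ops_per_second / watts


def joules_per_inference(ops_per_inference, tera_ops_per_second, watts):
    """Energy for one inference, assuming the chip runs at full throughput."""
    seconds = ops_per_inference / (tera_ops_per_second * 1e12)
    return seconds * watts


# Hypothetical chips; the numbers are placeholders, not real specifications.
datacenter = {"tops": 1000.0, "watts": 500.0}
edge_npu = {"tops": 10.0, "watts": 2.0}

# Hypothetical model that needs 5 billion operations per inference.
OPS_PER_INFERENCE = 5e9

for name, chip in (("datacenter", datacenter), ("edge_npu", edge_npu)):
    efficiency = tops_per_watt(chip["tops"], chip["watts"])
    energy = joules_per_inference(OPS_PER_INFERENCE, chip["tops"], chip["watts"])
    print(f"{name}: {efficiency:.1f} TOPS/W, {energy * 1000:.2f} mJ per inference")
```

With these made-up figures the edge chip is a hundred times slower in absolute terms, yet it spends less energy on each inference, which is the trade-off that matters inside a battery or thermal budget.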

Beyond Von Neumann: Exploring Neuromorphic Computing

Looking further ahead, neuromorphic computing represents a radical departure from traditional computer architectures. Inspired by the human brain, these systems aim to mimic biological neurons and synapses, processing and storing information in a highly integrated and energy-efficient manner. Projects like Intel’s Loihi chips are experimenting with event-driven, asynchronous processing, where computation occurs only when data changes, while IBM’s brain-inspired NorthPole pursues efficiency by tightly interleaving memory with compute on the chip. Both approaches could bring large gains in power efficiency for certain AI tasks, particularly those involving pattern recognition and continuous learning. While still largely in research phases, neuromorphic architectures hold promise for future AI applications that require ultra-low power consumption and continuous adaptation.
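
The event-driven idea can be illustrated with a leaky integrate-and-fire neuron, a common textbook model of a spiking neuron. The sketch below is a plain Python simulation under simplifying assumptions; the class `LIFNeuron` and its parameters are invented for this example and do not represent the programming model of any particular chip. The point is that the neuron does work only when an input event arrives, and the leak over any idle period is applied in a single step.

```python
import math


class LIFNeuron:
    """Leaky integrate-and-fire neuron updated only when an input event arrives."""

    def __init__(self, threshold=1.0, tau=10.0):
        self.threshold = threshold  # potential at which the neuron fires
        self.tau = tau              # leak time constant, same units as event times
        self.potential = 0.0
        self.last_time = 0.0

    def receive(self, time, weight):
        """Process one input spike; return True if the neuron fires."""
        # Apply the exponential leak for the whole idle interval at once,
        # so no computation happens while no events arrive.
        elapsed = time - self.last_time
        self.potential *= math.exp(-elapsed / self.tau)
        self.last_time = time

        self.potential += weight
        if self.potential >= self.threshold:
            self.potential = 0.0  # reset after firing
            return True
        return False


neuron = LIFNeuron(threshold=1.0, tau=10.0)
events = [(1.0, 0.6), (2.0, 0.6), (50.0, 0.6)]  # (time, synaptic weight)
spikes = [t for t, w in events if neuron.receive(t, w)]
print(spikes)
```

Two inputs arriving close together push the neuron over its threshold, while the isolated input at time 50 does not. The simulation performs exactly three updates, one per event, regardless of how much time the events span.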

Memory and Interconnects: The Unsung Heroes

It’s not just the processors; memory and interconnects play a pivotal role in enabling powerful AI computing. AI models are notoriously data-hungry, requiring vast amounts of information to be moved rapidly between memory and processing units. High-Bandwidth Memory (HBM), which stacks multiple DRAM dies vertically, offers significantly greater bandwidth than traditional DDR memory. Furthermore, high-speed interconnects like NVIDIA’s NVLink and the emerging Compute Express Link (CXL) are crucial for overcoming the data bottleneck, allowing multiple GPUs or specialized AI accelerators to exchange data efficiently within a server and, in some systems, across nodes in a cluster. These advancements help ensure that the formidable processing power isn’t starved for data, supporting the training of ever larger and more complex models.
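
One common way to reason about this data bottleneck is the roofline model: achievable throughput is capped either by peak compute or by how fast memory can feed the processor, whichever is lower. The Python sketch below applies that idea; the function name `attainable_tflops` and the peak and bandwidth figures are hypothetical placeholders chosen for round numbers, not the specifications of any real accelerator.

```python
def attainable_tflops(peak_tflops, bandwidth_tb_per_s, flops_per_byte):
    """Roofline estimate of achievable throughput.

    flops_per_byte is the workload's arithmetic intensity: how many
    operations it performs for each byte it moves to or from memory.
    """
    memory_bound_tflops = bandwidth_tb_per_s * flops_per_byte
    return min(peak_tflops, memory_bound_tflops)


# Hypothetical accelerator; placeholder numbers, not a real specification.
PEAK_TFLOPS = 1000.0
BANDWIDTH_TB_PER_S = 3.0

for intensity in (2.0, 100.0, 500.0):
    result = attainable_tflops(PEAK_TFLOPS, BANDWIDTH_TB_PER_S, intensity)
    print(f"{intensity:>5.0f} FLOPs/byte -> {result:.0f} TFLOPS attainable")
```

In this example a workload that performs only 2 operations per byte reaches a tiny fraction of the chip's peak, no matter how many compute units it has. Raising memory bandwidth lifts that ceiling directly, which is the case for faster memory and interconnects.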

The pace of innovation in hardware for AI computing shows no signs of slowing. From the continued evolution of GPU architectures to the emergence of highly specialized AI accelerators and the intriguing promise of neuromorphic computing, each development pushes the boundaries of what’s possible. As AI models grow in complexity and spread into more areas of technology, advances in silicon will remain the bedrock of this transformative era.